When talking about the Internet of Things (IoT), the discussion quickly turns to device abstraction. As a software engineer, it is natural to want to represent a physical switch as a boolean value and a sensor measurement as a simple number. It is hard to understand why every hardware manufacturer and every alliance comes up with yet another way to access their devices, when all there is to do is to send a few numbers across. Device abstraction layers were invented to tame this plethora of options.

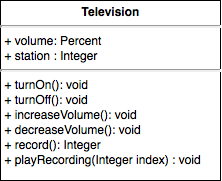

Actually, there is already a very common way to represent things (aka objects) in software: Object-oriented design! So let us create an abstract model of a television:

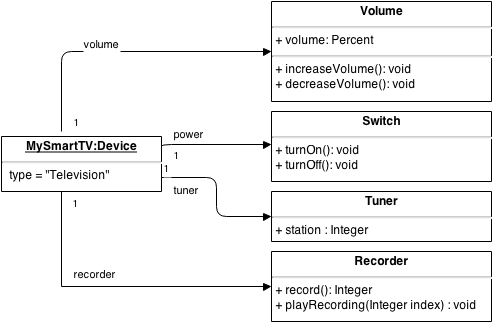

Has OOD failed, again?

The obvious problem here is that this is indeed "a" television. But this class is not usable for all TV sets that are out there - old CRTs have hardly any functionality, while modern smart TVs include a triple tuner, hard-disk recording etc. So how should this single representation suit them all? Well, you could simply add all potential functionality of a TV to this class and declare most of it optional. But this effectively means that your application has to deal with NotImplementedExceptions for 90% of the called methods in the end - the application degenerates into an exception handling facility... Somehow this reminds me of the fact that I was never convinced that OOD is the right thing for today's problems.

As a consequence, a more clever approach is to split devices into their functionalities in order to have reusable (and thus abstracted) parts. A device then becomes an object with a set of functions, often also called capabilities. Steven Posick wrote a very good blog post about why capabilities-based programming makes a lot of sense for IoT. Let's see what our representation could look like that way:
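As a minimal sketch of the capabilities idea, the following Python models a device as a named bag of reusable functions. All class and method names (`Capability`, `PowerSwitch`, `Tuner`, `Recorder`, `Device`, `all_off`) are made up for illustration and are not taken from any real framework.

```python
from dataclasses import dataclass


class Capability:
    """Base class for a reusable piece of device functionality."""


@dataclass
class PowerSwitch(Capability):
    on: bool = False

    def set_power(self, on: bool) -> None:
        self.on = on


@dataclass
class Tuner(Capability):
    channel: int = 1

    def set_channel(self, channel: int) -> None:
        self.channel = channel


@dataclass
class Recorder(Capability):
    recording: bool = False

    def start(self) -> None:
        self.recording = True


class Device:
    """A device is nothing more than a name plus a set of capabilities."""

    def __init__(self, name, *capabilities):
        self.name = name
        self._capabilities = {type(c): c for c in capabilities}

    def get(self, capability_type):
        """Return the capability of the given type, or None if absent."""
        return self._capabilities.get(capability_type)

    def supports(self, capability_type):
        return capability_type in self._capabilities


# Very different devices share the same small building blocks.
old_crt = Device("old CRT", PowerSwitch(), Tuner())
smart_tv = Device("smart TV", PowerSwitch(), Tuner(), Recorder())
lamp = Device("lamp", PowerSwitch())


def all_off(devices):
    """Works for any device with a PowerSwitch, whether TV or lamp."""
    for device in devices:
        switch = device.get(PowerSwitch)
        if switch is not None:
            switch.set_power(False)
```

An application asks a device whether it supports a capability instead of calling a method and catching an exception, so the old CRT simply has no `Recorder` rather than a recorder that throws.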

This is already much better, as the commonalities are defined on a finer-grained level. Still, another problem creeps in from the opposite direction: the primitive types, which form the "atoms" on which the device abstraction is built. Are the usual primitive types a good choice? The status of a magnetic contact can be represented as true/false - or should it rather be false/true? A TV station can be a simple integer. But which integer is then CNN and which is ESPN? These questions suggest that there is too much ambiguity in such a model, and hence the use of enumerations seems to be a logical choice. On closer inspection, this brings us back to the problem we had before: modelling a function such as the channel as a primitive type (here an integer) works for more or less all devices. Introducing the channel list as an enumeration, however, suddenly makes the model very device-specific and hardly reusable for other TVs. The same holds for all other constraints on values: What is the frequency range of my tuner? Which step size does my thermostat support and what is its maximum value? All such constraints are very device-specific and hence should be treated as (specific) meta-data in the model.

A similar situation applies to units: Sensors provide values in a certain unit, and this is often built directly into the hardware. Depending on its manufacturer and intended use case, a temperature sensor might deliver values in kelvin, degrees Celsius or degrees Fahrenheit. It is therefore not a good idea to define the unit of a value on the abstraction layer - this would again make it harder to find a generic "TemperatureSensor" abstraction, or rather would introduce three different versions of it. The better choice is therefore to treat the unit as meta-data.
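To make the "constraints and units as meta-data" idea concrete, here is a small Python sketch. The names `NumberMeta`, `TemperatureSensor` and `to_celsius`, as well as the unit strings, are assumptions made for this example only. The value itself stays a plain number, while range, step size and unit travel alongside it as device-specific meta-data.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class NumberMeta:
    """Device-specific meta-data attached to a generic numeric function."""
    unit: Optional[str] = None
    minimum: Optional[float] = None
    maximum: Optional[float] = None
    step: Optional[float] = None

    def accepts(self, value: float) -> bool:
        """Check a value against the range and step size, where given."""
        if self.minimum is not None and value < self.minimum:
            return False
        if self.maximum is not None and value > self.maximum:
            return False
        if self.step:
            base = self.minimum if self.minimum is not None else 0.0
            steps = (value - base) / self.step
            return abs(steps - round(steps)) < 1e-9
        return True


@dataclass
class TemperatureSensor:
    """One generic abstraction, regardless of the unit the hardware uses."""
    value: float
    meta: NumberMeta


def to_celsius(sensor: TemperatureSensor) -> float:
    """Normalize a reading by looking at the unit meta-data."""
    unit = sensor.meta.unit
    if unit == "°C":
        return sensor.value
    if unit == "K":
        return sensor.value - 273.15
    if unit == "°F":
        return (sensor.value - 32) * 5 / 9
    raise ValueError(f"unknown unit: {unit!r}")


# A hypothetical thermostat: 5 to 30 °C in steps of 0.5.
thermostat_meta = NumberMeta(unit="°C", minimum=5.0, maximum=30.0, step=0.5)
```

With this split, an application that only needs "a temperature" can work with any sensor, and one that wants to validate user input can consult the meta-data of the concrete device.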

Configuration Hell

Very often, a huge part of a device's functionality is exclusively used for its configuration. The configuration options of a device are highly hardware-specific and thus hard to abstract. Treating configuration functionality separately from "operational" functionality is therefore useful. A good example is a "simple" dimmer: Its operational functionality is fairly easy: It has a state between 0 and 100, and this can be set through an operation. But in order to configure this behavior, there is a multitude of different options: Individual dimming curves can be defined depending on the type of bulb. How quickly should the new value be reached, i.e. is there a smooth transition? Is it at all ok to dim the connected device, or must it only be switched on and off? Should it go to 100% when being switched on, or should it remember the state it had before it was turned off? Some dimmers actually come with 20 or more configuration options - a really strong reason for not trying to generalize this.

(Figure: Dimmers from different manufacturers)
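The separation of operational and configuration functionality could be sketched as follows. This is an illustration under assumptions, not a real driver: the `Dimmer` class and the configuration keys (`restore_last_level`, `transition_ms`, `dimming_curve`) are invented for the example. The operational API is identical for every dimmer, while the configuration is an opaque, hardware-specific map.

```python
from dataclasses import dataclass, field
from typing import Any, Dict


@dataclass
class Dimmer:
    """Operational side: a level between 0 and 100, same for all dimmers."""
    level: int = 0
    # Configuration side: hardware-specific options that the abstraction
    # layer does not try to generalize (keys are hypothetical).
    config: Dict[str, Any] = field(default_factory=dict)
    _last_level: int = field(default=100, repr=False)

    def set_level(self, level: int) -> None:
        if not 0 <= level <= 100:
            raise ValueError("level must be between 0 and 100")
        if level > 0:
            self._last_level = level  # remember the last non-zero level
        self.level = level

    def switch_on(self) -> None:
        """Go to 100%, or to the previous level if configured to do so."""
        if self.config.get("restore_last_level", False):
            self.level = self._last_level
        else:
            self.level = 100


# Two hypothetical products with completely different configuration options.
vendor_a = Dimmer(config={"restore_last_level": True, "transition_ms": 400})
vendor_b = Dimmer(config={"dimming_curve": "led"})
```

Applications and automation rules only ever call `set_level` and `switch_on`; a setup tool is the only place that needs to know which configuration keys a particular product understands.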

Thinking about dimmers brings up yet another angle: Is it really the dimmer that the user or application is interested in? No, the dimmer is only a means to change the light that is plugged into it. Likewise, a magnetic contact is not of interest in itself - what matters is the door it is attached to. The temperature of a sensor is not interesting at all, but the temperature of the room it is in is. Ideally, we would like to work with concepts like "door", "room" or "pet" in the application layer, not necessarily with the devices themselves. Such a collection of concepts is usually called an ontology. An ontology describes the relations between the concepts, which gives them semantic meaning and allows implicit knowledge to be deduced.

For a device abstraction layer, such an ontology brings insurmountable obstacles: A device manufacturer simply cannot know about the concrete usage of the device and thus cannot provide the semantic classification. Only the installer or user has the required knowledge. If, for example, he connects his fridge to a smart plug in order to measure its energy consumption, he clearly would not want it to be turned off by an "all-off" scenario. He therefore needs to teach the system about this, either explicitly or implicitly through his behavior. The latter means that the system can only learn through trial and error, which sounds nice when it is called "self-learning" in marketing material, but which can lead to quite some frustration for the users - there is a great post on this by Johannes Ernst about the Nest thermostat. I fully agree that this is an easy hard problem.

Separating functions from the physical device also means that you might have to deal with multiple locations - if you want to "turn off all lights in your kitchen", then this should include the light that is controlled through an actuator located in your electrical cabinet. If this actuator has multiple channels, there is one physical location (the cabinet), but multiple functional locations. This again is information that needs to be taught to the system. In daily use, the device and its location are hardly relevant, but the locations of the functions are.
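The distinction between physical and functional locations can be sketched in a few lines of Python. The `Channel` and `Actuator` classes and the `turn_off` helper are hypothetical names chosen for this example; the point is only that a command like "turn off all lights in the kitchen" has to match on the location of the function, not on the location of the hardware.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Channel:
    function: str   # e.g. "light"
    location: str   # functional location, taught to the system by the user
    on: bool = True


@dataclass
class Actuator:
    name: str
    location: str   # physical location of the hardware
    channels: List[Channel] = field(default_factory=list)


def turn_off(actuators, function, location):
    """Switch off every channel with this function in a functional location."""
    switched = []
    for actuator in actuators:
        for channel in actuator.channels:
            if channel.function == function and channel.location == location:
                channel.on = False
                switched.append(channel)
    return switched


# One actuator in the electrical cabinet, serving two different rooms.
cabinet_actuator = Actuator(
    name="4-channel actuator",
    location="electrical cabinet",
    channels=[
        Channel(function="light", location="kitchen"),
        Channel(function="light", location="living room"),
    ],
)
```

Calling `turn_off([cabinet_actuator], "light", "kitchen")` switches only the kitchen channel, even though no hardware is physically located in the kitchen.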

Static Descriptions vs. Dynamic Behavior

Assuming you have managed to classify all your devices nicely and have a generalized description of them, a new problem comes up once you start using your system: the dynamic runtime behavior. A static description can quickly become useless when devices are operated:

- Changing the mode of your heating system between "manual" and "automatic" will radically change the set of available options for the current mode. So how could one describe the availability of functions and options that depend on certain values of other functions?

- Sensor values can be pulled or pushed - how often does this happen? Is pushing done at a fixed rate or only upon a change in the value (and with which threshold)?

- When sending a command to a device, is this directly transferred or will it take a while as the device might be in a (battery-optimized) sleep mode? How long does the operation take to be fully completed?

- What error situations can occur and how can this be determined? Are there recovery options?

- Are state changes described through a state machine? Is there maybe even the need for transaction support?
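The first point in the list above, functions whose availability depends on the value of another function, can be illustrated with a small sketch. The `HeatingSystem` class, its modes and the `AVAILABLE_FUNCTIONS` table are assumptions for this example; a real heating system will have its own, device-specific rules, which is exactly why they are hard to capture in a static description.

```python
# Which functions are available in which mode (hypothetical example data).
AVAILABLE_FUNCTIONS = {
    "manual": {"mode", "setpoint"},
    "automatic": {"mode", "schedule"},
}


class HeatingSystem:
    def __init__(self):
        self.mode = "automatic"
        self.setpoint = 21.0

    def available_functions(self):
        """The usable functions depend on the current value of 'mode'."""
        return AVAILABLE_FUNCTIONS[self.mode]

    def set_mode(self, mode):
        if mode not in AVAILABLE_FUNCTIONS:
            raise ValueError(f"unknown mode: {mode!r}")
        self.mode = mode

    def set_setpoint(self, value):
        if "setpoint" not in self.available_functions():
            raise RuntimeError(f"setpoint cannot be set in mode {self.mode!r}")
        self.setpoint = value
```

A static description would have to list both `setpoint` and `schedule`, yet at any point in time only one of them is actually usable; the application has to query the current availability at runtime.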

All of this would be nice to know for an application - but at the same time, all of this is very device-specific and cannot be abstracted away. As a consequence, applications will either be simplified, i.e. they cannot cover every single detail and situation in an optimal manner, or they will be device-specific. Usually one will end up somewhere in the middle. In practice, almost all so-called device abstraction layers are in reality device access abstraction layers, i.e. they provide the same API for accessing different kinds of devices, but they do not abstract their functionality. Even describing the purely hardware-related characteristics in a good - and common - way is already very hard. The European Commission is currently running a study about ontologies for smart appliances with the goal of distilling the commonalities into a single ontology - the result will surely be interesting.

So how does Eclipse SmartHome actually deal with all those complex problems?

As the initial code base came from the openHAB project, there is a strong focus on a high functional abstraction level. In openHAB, one of the main concepts is that of an item, where an item represents a specific function. Items are on the "virtual" or "modelled" layer of the system architecture and thus they can exist independently of any real hardware. An important design principle is that it should be possible to replace hardware without having to make any changes to the items and thus to the application level, which consists of the user interface and the automation rules. The item types are very limited: some are rather abstract/primitive like "Number" or "String", others are more concrete and specific to the smart home domain like "Rollershutter" or "Color" - the set of item types is kept small and new additions are thoroughly discussed. In general, items are meant to be useful on the application level, but the true semantics are usually brought into the system by the user as he designs the UI and the automation rules in a way that supports his use cases.

openHAB 1.x actually completely does without the notion of a device - instead, items are directly bound to a certain functionality of a device through an appropriate configuration. This was possible for openHAB as it concentrated on the operational side and did not provide any (runtime) functionality for system setup and configuration.
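The design principle that hardware can be replaced without touching items or rules can be sketched as follows. This is deliberately not the openHAB or Eclipse SmartHome API; `Item`, `bind`, `send_command`, the two vendor functions and the item name are all hypothetical and only illustrate the idea of an item being bound to a device function through configuration.

```python
class Item:
    """A virtual function on the application level, independent of hardware."""

    def __init__(self, name, item_type):
        self.name = name
        self.item_type = item_type
        self.state = None
        self._binding = None

    def bind(self, send):
        """Attach (or replace) the hardware-specific command handler."""
        self._binding = send

    def send_command(self, command):
        self.state = command
        if self._binding is not None:
            self._binding(command)


# Two hypothetical devices with different native value ranges.
vendor_a_log = []
vendor_b_log = []


def vendor_a_dimmer(level):
    vendor_a_log.append(level)                     # expects 0..100


def vendor_b_dimmer(level):
    vendor_b_log.append(round(level * 255 / 100))  # expects 0..255


def evening_rule(item):
    """An automation rule that only knows the item, never the hardware."""
    item.send_command(40)


kitchen_light = Item("Kitchen_Light", "Dimmer")
kitchen_light.bind(vendor_a_dimmer)
```

Swapping the hardware amounts to a single call to `kitchen_light.bind(vendor_b_dimmer)`; `evening_rule` and any user interface built on the item remain unchanged.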

In Eclipse SmartHome, configuration facilities are a major focus for new contributions. For this reason, the thing concept has been introduced as a complement to the item concept. It not only allows describing the functionality and structure of things in a common way, but also provides the information needed to build user interfaces on top, including internationalization support. Once the new Eclipse Vorto project is in place, its repository can be a good source for adding device support to bindings by generating the appropriate thing type descriptors.

The static way of describing things through XML is just one (default) possibility of providing thing types in Eclipse SmartHome though - the framework also allows dynamic providers, so that the structure of things can be provided at runtime. The name "thing" was chosen over "device" in order to allow "virtual" things as well, such as web services or an arbitrary source of information. It is important to note that there is no thing taxonomy (i.e. a classification system) in Eclipse SmartHome yet. Different solutions built on Eclipse SmartHome might use different taxonomies - for this reason, this has not been addressed so far.

Tagging to the Rescue

A more powerful concept than a simple taxonomy is currently being implemented: Tagging support. The basic idea is to provide a very simple means to add semantic information to items, so that they can be easily consumed by applications - tags could be e.g. something like "light" or "alarm", i.e. their definition should be use case driven and not device driven. Thing type descriptions can "suggest" default tags for items that are linked to their channels, but the user should have the chance to change the tags on an item in order to provide semantic information that reflects his personal usage of things. Although it is a simple concept, I am convinced that it can support many nice use cases without adding too much burden on developers - when targeting a developer community, the "power by simplicity" principle is very important to adopt.
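Since tagging support is still being implemented, the following is only a sketch of the idea and not the Eclipse SmartHome API. The `TaggedItem` class, the helper functions, the item names and the "appliance" tag are assumptions for illustration; "light" is one of the example tags mentioned above.

```python
class TaggedItem:
    def __init__(self, name, suggested_tags=()):
        self.name = name
        # Defaults suggested by the thing type; the user may change them.
        self.tags = set(suggested_tags)


def items_with_tag(items, tag):
    """Applications select items by use case, not by device type."""
    return [item for item in items if tag in item.tags]


def all_lights_off(items):
    """Return the names of the items an 'all lights off' scene would switch."""
    return sorted(item.name for item in items_with_tag(items, "light"))


# A lamp's thing type can suggest "light"; a smart plug cannot know
# what is plugged into it, so it suggests nothing.
ceiling_lamp = TaggedItem("Ceiling_Lamp", suggested_tags={"light"})
plug_floor_lamp = TaggedItem("Plug_Floor_Lamp")
plug_fridge = TaggedItem("Plug_Fridge")

# The user supplies the knowledge about the actual usage.
plug_floor_lamp.tags.add("light")
plug_fridge.tags.add("appliance")

items = [ceiling_lamp, plug_floor_lamp, plug_fridge]
```

Here `all_lights_off(items)` picks up the ceiling lamp and the plug with the floor lamp, while the identical plug that powers the fridge is left alone, which is the kind of semantic information only the user can provide.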

I'd love to hear about your experiences and ideas on this topic - feel free to join the discussion!